Image source: Model Council AI

I built Model Council AI because I kept using AI for serious technical and product decisions, but I did not want to rely on one confident answer from one model.

That is the core problem.

AI models are useful. They can explain trade-offs, review architecture, critique product ideas, and help reason through strategy. But a single model can sound clear, logical, and convincing while still missing an important assumption, risk, or operational detail.

For small tasks, that may be fine.

For serious decisions, I wanted more.

I wanted disagreement. I wanted critique. I wanted to see which assumptions survived when multiple models looked at the same question independently.

That is why I built Model Council AI.

· · ·

One confident AI answer is not enough

As a developer and DevOps engineer, I often use AI to think through questions where the answer is not obvious:

- Should this product use serverless or containers?

- Is Kubernetes worth it for this workload and team?

- Which database is safest for this architecture?

- Should a pricing experiment be shipped, delayed, or killed?

- What is the best incident response plan for a business-critical application?

- Should this roadmap bet be cut, delayed, or doubled down on?

These are not simple prompt-and-answer questions.

They involve cost, reliability, team capability, operational complexity, security, future scale, time to market, and risk.

One model may notice cost.

Another may notice operational burden.

Another may challenge the product assumption.

Another may be wrong, but wrong in a useful way because it exposes a path I had not considered.

The useful part is not that every model agrees. The useful part is that the reasoning becomes easier to inspect.

· · ·

The manual workflow I kept repeating

Before building this, I was already running a manual version of the workflow.

I would ask the same question across multiple AI models. Then I would compare the answers myself.

Where do they agree?

Where do they disagree?

Which answer is too shallow?

Which one notices the risk?

Which assumptions are hidden?

Which recommendation still makes sense after criticism?

That process was useful, but messy.

It meant multiple browser tabs, slightly different prompts, different answer formats, and no clean synthesis at the end. The value was there, but the workflow was not.

Model Council AI is my attempt to make that process structured.

· · ·

What Model Council AI does

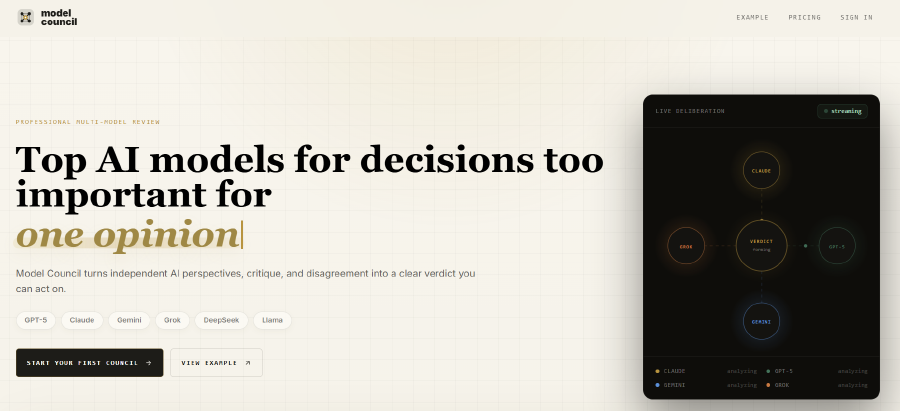

Model Council AI takes one hard question and runs it through a council-style workflow.

The flow is simple:

- You ask one question.

- Multiple AI models answer independently.

- The models critique each other.

- A final synthesis produces one structured verdict.
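The four steps above can be sketched in a few lines of Python. This is a minimal illustration, not the actual Model Council AI implementation: `run_council`, `make_stub`, and the prompt wording are all hypothetical, and the "models" are stub functions standing in for real model calls.

```python
from typing import Callable, Dict

# Assumption: a "model" is any callable that maps a prompt to a text answer.
# Real provider calls would replace the stubs used below.
Model = Callable[[str], str]


def run_council(question: str, models: Dict[str, Model], synthesizer: Model) -> dict:
    # Step 2: every model answers the same question without seeing the others.
    answers = {name: ask(question) for name, ask in models.items()}

    # Step 3: each model critiques the answers written by the other models.
    critiques = {}
    for name, ask in models.items():
        others = "\n".join(
            f"[{other}] {text}" for other, text in answers.items() if other != name
        )
        critiques[name] = ask(f"Critique these answers to '{question}':\n{others}")

    # Step 4: one synthesis call sees every answer and every critique.
    transcript = "\n".join(
        f"[{name}] answer: {answers[name]}\n[{name}] critique: {critiques[name]}"
        for name in models
    )
    verdict = synthesizer(f"Question: {question}\n{transcript}\nGive one verdict.")
    return {"answers": answers, "critiques": critiques, "verdict": verdict}


def make_stub(opinion: str) -> Model:
    """Hypothetical stand-in for a real model: always argues for one opinion."""
    def stub(prompt: str) -> str:
        if prompt.startswith("Critique"):
            return f"I still lean {opinion}, but the other answers raise fair points."
        return f"I recommend {opinion}."
    return stub


# Step 1: you ask one question.
council = {"model_a": make_stub("serverless"), "model_b": make_stub("containers")}
result = run_council(
    "Should this product use serverless or containers?",
    council,
    synthesizer=lambda prompt: "Verdict: the models disagree; check the workload.",
)
print(result["answers"])
print(result["verdict"])
```

The point of the sketch is the ordering: answers are collected before any model sees another model's output, and critique happens before synthesis.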

The final output is designed to show:

- Agreement level

- Disagreement points

- Confidence

- Reasoning notes

- Final recommendation
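One way to picture that output is as a small record with one field per item in the list. The sketch below is an assumption for illustration only: the `CouncilVerdict` class, its field types, and the `to_markdown` layout are hypothetical and do not describe the product's real schema or export format.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class CouncilVerdict:
    # Field names mirror the list above; the types are assumptions.
    agreement_level: str
    confidence: str
    final_recommendation: str
    disagreement_points: List[str] = field(default_factory=list)
    reasoning_notes: List[str] = field(default_factory=list)

    def to_markdown(self) -> str:
        """Render the verdict as plain Markdown-style text."""
        lines = [
            f"Agreement level: {self.agreement_level}",
            f"Confidence: {self.confidence}",
            "",
            "Disagreement points:",
            *[f"- {point}" for point in self.disagreement_points],
            "",
            "Reasoning notes:",
            *[f"- {note}" for note in self.reasoning_notes],
            "",
            f"Final recommendation: {self.final_recommendation}",
        ]
        return "\n".join(lines)


# Made-up example values, only to show the shape of the record.
verdict = CouncilVerdict(
    agreement_level="partial",
    confidence="medium",
    final_recommendation="Start with serverless and revisit at higher load",
    disagreement_points=["Cost at scale", "Runtime predictability"],
    reasoning_notes=["Small team favors lower operational overhead"],
)
print(verdict.to_markdown())
```

Keeping disagreement points and reasoning notes as separate fields means the final recommendation never has to stand alone.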

The goal is not to replace human judgment.

The goal is to make the reasoning process easier to inspect before you make a decision.

· · ·

A simple example

Take a question like:

Should this product use serverless or containers?

If I ask one model, I may get a polished answer that recommends one path.

With Model Council AI, the same question goes to multiple models, and each one answers independently. One model may favor serverless because it reduces early operational overhead. Another may prefer containers because the workload needs predictable runtime behavior. Another may focus on team experience, deployment maturity, or cost at scale.

Then the models critique each other.

The final verdict should not just say “use serverless” or “use containers.” It should explain where the models agreed, where they disagreed, what assumptions matter, and which recommendation looks strongest under the current constraints.

That is the difference I wanted.

Not just an answer.

A better view of the decision.

· · ·

Consensus is not truth

This part matters.

Model Council AI is not a source of truth.

Models can agree and still be wrong. A confident final verdict can still miss context. A majority view can still depend on a weak assumption.

So I do not see this as decision automation.

I see it as decision support.

The useful part is seeing:

- Where models agree

- Where they disagree

- What assumptions they make

- Which trade-offs they prioritize

- Which risks appear across multiple answers

- Which arguments survive critique

For technical, product, architecture, and strategy decisions, that can be valuable.
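To make "consensus is not truth" concrete, here is a rough sketch of how an agreement signal could be tallied. It assumes each model's answer has already been reduced to a short recommendation label, which is a simplification; `summarize_agreement` and the labels are hypothetical, and a simple majority share is not necessarily how Model Council AI measures agreement.

```python
from collections import Counter
from typing import Dict, Tuple


def summarize_agreement(recommendations: Dict[str, str]) -> Tuple[str, float, Dict[str, str]]:
    """Return the most common recommendation, its share of the council,
    and the models that recommended something else."""
    if not recommendations:
        raise ValueError("need at least one recommendation")
    counts = Counter(recommendations.values())
    top, votes = counts.most_common(1)[0]
    share = votes / len(recommendations)
    dissenters = {model: rec for model, rec in recommendations.items() if rec != top}
    return top, share, dissenters


# Made-up labels for three hypothetical models.
recs = {"model_a": "serverless", "model_b": "containers", "model_c": "serverless"}
top, share, dissenters = summarize_agreement(recs)
print(f"{top}: {share:.0%} of models; dissent from {sorted(dissenters)}")
```

The share only says how many models landed on the same label. It says nothing about whether the shared assumption behind that label is sound, which is why the dissenting answers are returned alongside it instead of being dropped.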

· · ·

Who it is for

I built Model Council AI for people who use AI seriously in their work.

That includes:

- Developers

- DevOps engineers

- Platform engineers

- Architects

- Founders

- Indie hackers

- Researchers and writers

- AI power users

It is especially useful when the question is not just “give me an answer”, but “help me reason through this decision.”

· · ·

What is live today

The first version is live now.

It includes:

- Free hosted council runs

- Lite, Balanced, and Premium presets

- Independent model answers

- Cross-model critique

- Synthesized final verdicts

- Agreement and disagreement analysis

- Confidence and reasoning notes

- Markdown export

- PDF export

- Auth

- 30-day history

- Paid OpenRouter-key mode for custom model usage

There is a free hosted trial so you can test it without setting anything up.

Paid options are available for people who want more usage, exports, history downloads, or OpenRouter-key mode for custom model usage.

· · ·

What I want to learn next

This is the first public version, and the part I care about now is usage.

I want to see what kinds of decisions people actually run through it.

I want to learn:

- Where the council output helps

- Where the workflow feels too slow

- Where the result feels too complex

- Which decisions are a good fit

- Which decisions are a bad fit

- What would make it useful enough to keep using

The product will get better from real questions, not imagined ones.

· · ·

Try it

Model Council AI is live here:

If you try it, send me one hard technical, product, architecture, research, or strategy question you would run through a model council.

I am interested in seeing which decisions people are willing to test with multiple models instead of relying on one confident answer.